Here’s a new term to think about: “machine ethics.” This is a long and detailed abstract discussing whether machine ethics can exist at all. Ethics are really a “human” thing, not something inherent to a “machine,” no matter how hard you try to build it in. Just the existence of a “machine ethicist,” as discussed here, is something that would send people’s heads spinning, i.e. “how do I do that?” in plain terms. If there is no “machine ethicist” taking care of such issues, then, as I write about in more day-to-day layman terms, “Algo Duping and the Attacks of the Killer Algorithms” do exist and happen every day, and not just in the markets. It happens everywhere around you, with machines running 24/7 making life-impacting decisions about all of us, and yes, there’s no “machine ethicist,” only models that yield profit. Here’s the conclusion of the abstract…the last line. Technology and behavior are not going to allow machine ethics at all.

“Finally, given the diversity of AI systems today and for the foreseeable future and the deep dependence of ethical behavior on context, there appears to be no hope of machine ethics building on an existing technical core.”

To put it another way, if you are expecting machine learning to inherit some “human” ethics, well, that’s down the drain: no matter how close they get to making robots and computers work and act like humans, you’re not going to find any trace of human ethics. There are a lot of businesses chasing this idea, even to the point where Google itself is doing a study to figure out “how people work,” and that’s a pretty amazing venture in itself when you figure that over a number of years they have hired thousands of people and are only now at this crossroads.

In summary, we are not going to get positive social outcomes from intelligent systems, as “people don’t work that way.” I say that a lot, but it is true. Sure, there’s a lot of neat software that entertains us and makes us more productive; that’s the stuff we like. But there’s the other side, using modeling to deny access and care, and that part is accelerating. I just read where Wendell Potter has already come out and said “be ready for more claim denials,” which will occur as insurers change their risk models. They model 24/7, the government can’t get its head around this, and we suffer: we end up with quantified justification for things that are just not true, or we get “Algo Duped.” You can read below a prior post on how the insurers “changed the model” to keep risk assessments and profits in line with their current goals, and again, machine ethics? Heck no, just money. So when machines are running things, as programmed by humans, there are no ethics at all, and you end up being told “it’s a business decision,” but in healthcare analytics that “business decision” might be your life.

Pre-existing Conditions With Health Insurance May Be Gone But Narrow Networks Are Providing The Same End Result For Many Ill Patients With Not Being Able To Get Care - Extreme Cases Of New Killer Algorithms Popping Up With Insurance Business Models…

I have my little project, the “Attack of the Killer Algorithms” page, where I curate videos and links with valuable information from people smarter than me and try to share where some of the knowledge comes from. Being extremely logical, and having been a programmer myself, I can see the immediate fit, and I realize it’s a hard pill to swallow that this is really happening. I don’t like it myself, so I keep trying to share the logic and the knowledge of others, and it’s a stinky, uncomfortable topic too.

You have people who dance around the topic in the same way while trying to raise this awareness, like Bill Moyers, Robert Reich, Michael Moore, and the list goes on, but they can’t seem to get down to the raw level here and acknowledge the root cause either. After Michael Moore made his movie “Capitalism: A Love Story,” it was in the news that he was mad, or frustrated I should say, with the American public. Why?

He did a great job with the movie, and with “Sicko” for that matter, but again there was no message at the end about a suggested course of action. So sure, people got mad and upset, and then it all died, as there was no message on how to counteract what was going on. And again we come right back to the machines and those who load the code and algorithms that run them, and they know very well what they are doing, as it makes money. Subprime could not have occurred without these machine manipulations, and even Madoff knew how to build a fake front with them.

So in summary, take a look and read the abstract, then visit the footer of this blog for a few videos that will help bring you up to speed, and then there’s the Attack of the Killer Algorithms/Algo Duping page. You can’t sit there and take in every video and link here at one time either, unless you decide to make a day of it, so watch and read and then come back.

Attack of the Killer Algorithms – “Algo Duping 101”

Read also what the World Privacy Forum is saying about “scoring” in the US; all eyes are on us, as this too adds to inequality by denying access and making the rich wealthier. Again, no ethics here at all with the computer code and algorithms.

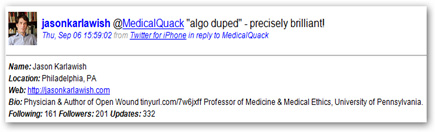

Here’s another good person to follow on Twitter (@FrankPasquale). Actually, I have to credit Frank Pasquale with finding this abstract, and I find my thinking aligned in the same direction as his, “Algorithms Behind Money and Information”; he has a book coming later this year on the topic. Be sure not to ignore the word “behind” here, as that is what you “don’t get to see.” Maybe I should get one going soon too (grin). BD

“Finally, the (possible) existence of genuine moral dilemmas is problematic for the machine ethicist.

This section will survey some of the literature in normative ethics and moral psychology which suggest that morality does not lend itself to an algorithmic solution.

Given an arbitrary ethical theory or utility function, a machine (like a human) will be limited in its ability to act successfully based on it in complex environments.

Indeed, given the potential for ethical ‘black swans’ arising from complex social system dynamics, it will not always be clear how a RUB system would act in the real world.

Thus, even if machines act in ethical fashions in their prescribed domains, the overall impact may not be broadly beneficial, particularly if control of AGI technologies is concentrated in already powerful groups such as the militaries or large corporations that are not necessarily incentivized to diffuse the benefits of these technologies.”

Many authors have proposed constraining the behavior of intelligent systems with ‘machine ethics’ to ensure positive social outcomes from the development of such systems. This paper critically analyses the prospects for machine ethics, identifying several inherent limitations. While machine ethics may increase the probability of ethical behavior in some situations, it cannot guarantee it due to the nature of ethics, the computational limitations of computational agents and the complexity of the world. In addition, machine ethics, even if it were to be ‘solved’ at a technical level, would be insufficient to ensure positive social outcomes from intelligent systems.

http://www.tandfonline.com/doi/full/10.1080/0952813X.2014.895108#tabModule